If you have spent any time with SSIS, you have heard that asynchronous components, also known as “blocking” components, are bad for performance and should be avoided where possible. You have also heard some analogy or another that explains how these components “block” the data in the data flow and slow everything down. But I’m here to tell you that it gets much worse.

The data flow sends data downstream in buffers. Each buffer uses memory first, if it is available, and the memory captured for that buffer of records is released once the data flow is done with it. For example, once the records in a buffer have been loaded to the destination, the buffer and its memory are released. That memory can then be used for the next buffer of records coming down the pipeline in the data flow task. This is where the true evil of asynchronous components comes in.

What happens if the buffer is not released because it got caught up at some blocking transform in the data flow? In a worst-case scenario your machine/server becomes starved for memory and SSIS is forced to use disk instead of memory. Too many buffers spooled to disk can dramatically increase your package run time. Let me demonstrate.
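Before getting to the real packages, here is a toy model of the idea in Python. It is only a sketch under simplifying assumptions, not how the SSIS engine actually manages buffers: `simulate`, `memory_slots`, and the numbers used are all made up for illustration. Every buffer is the same size, a streaming flow releases each buffer as soon as the destination has written it, and a blocking flow holds every buffer until the last one arrives.

```python
def simulate(num_buffers, memory_slots, blocking):
    """Toy model: return (peak buffers in memory, buffers spooled to disk).

    num_buffers  -- buffers the source sends down the pipeline
    memory_slots -- how many buffers fit in memory at once (hypothetical limit)
    blocking     -- True if a blocking transform holds every buffer until the end
    """
    held = 0      # buffers the pipeline is currently holding on to
    peak = 0      # most buffers resident in memory at any one time
    spooled = 0   # buffers that did not fit in memory and went to disk

    for _ in range(num_buffers):
        held += 1                          # a new buffer arrives from the source
        if held > memory_slots:
            spooled += 1                   # no free memory left: spill to disk
        peak = max(peak, min(held, memory_slots))
        if not blocking:
            held -= 1                      # rows written, buffer released for reuse

    return peak, spooled


if __name__ == "__main__":
    print("streaming:", simulate(100, 10, blocking=False))
    print("blocking: ", simulate(100, 10, blocking=True))
```

In this model the streaming flow never needs more than one buffer in memory, while the blocking flow fills all ten slots and pushes the remaining ninety buffers to disk.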

For this example I have created two packages. Each package uses a Sort transform, but at a different point in the data flow.

Package One: This package takes an unknown amount of time to run; I cut it off after approximately 68 minutes. The first screenshot shows the package in its current state, and the second and third screenshots give some insight into why it is taking so long to run.

Screenshot 1:

The following screenshot was taken in SSMS after I started the package from Integration Services and then ran the following query against the SSISDB.

- `SELECT * FROM [catalog].[dm_execution_performance_counters](NULL);`

Screenshot 2:

Screenshot 3: (Here we see the execution performance from the SSISDB)

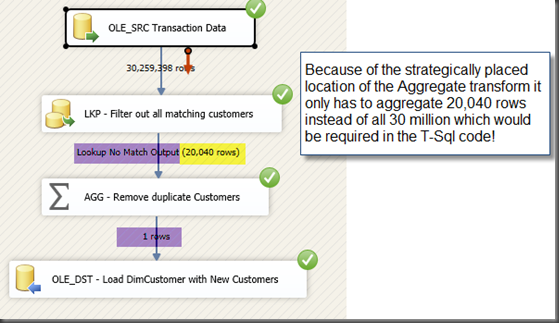

Unfortunately, there are times when you have no choice and must use asynchronous components in SSIS. One option to consider: can the blocking transform be placed in a more strategic location to minimize the negative impact on performance? For example, in package one the Sort transform was placed before the lookup. The lookup only forwards non-matching records to the destination, so why do the matching records need to be sorted? If matching records don’t need to be sorted, we can move the blocking transform to after the lookup, so that far fewer rows reach it and everything stays in memory.
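To make the reasoning concrete, here is a small Python sketch of the two orderings. It is an illustration only: `run_pipeline`, the row counts, and the 99% match rate are assumptions invented for the example, not measurements from the packages above. It shows that both orderings produce the same output, but the sort has to hold far fewer rows when it sits after the lookup.

```python
import random


def run_pipeline(source_keys, reference_keys, sort_first):
    """Return (output_rows, rows_the_sort_had_to_hold) for a toy data flow.

    The 'lookup' forwards only keys that are NOT found in the reference set,
    mirroring a lookup that sends non-matching rows to the destination.
    """
    reference = set(reference_keys)
    rows = list(source_keys)

    if sort_first:
        rows_sorted = len(rows)                  # sort must hold every source row
        rows = sorted(rows)
        rows = [k for k in rows if k not in reference]
    else:
        rows = [k for k in rows if k not in reference]
        rows_sorted = len(rows)                  # sort only holds non-matching rows
        rows = sorted(rows)

    return rows, rows_sorted


random.seed(0)
source = random.sample(range(100_000), 100_000)  # 100,000 keys in random order
reference = range(99_000)                        # assume 99% of keys match

out_before, held_before = run_pipeline(source, reference, sort_first=True)
out_after, held_after = run_pipeline(source, reference, sort_first=False)

if __name__ == "__main__":
    print("same output:", out_before == out_after)
    print("rows held by sort, sort before lookup:", held_before)
    print("rows held by sort, sort after lookup: ", held_after)
```

With these made-up numbers the sort holds 100,000 rows when it runs before the lookup and only 1,000 when it runs after, which is why the second ordering has a much better chance of staying in memory.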

Package Two: (This package completes in 1 minute and 20 seconds)

Screenshot 1:

Screenshot 2:

Screenshot 3:

Thanks for looking!