In this blog, we are going to review the Copy Data activity. The Copy Data activity can be used to copy data among data stores located on-premises and in the cloud.

In part four of my Azure Data Factory series, I showed you how you could use the If Condition activity to compare the output parameters from two separate activities. We determined that if the last modified date of the file (located in blob storage) was greater than the last load time then the file should be processed. Now we are going to proceed with that processing using the Copy activity. If you are new to this series you can view the previous posts here:

- Check out part one here: Azure Data Factory – Get Metadata Activity

- Check out part two here: Azure Data Factory – Stored Procedure Activity

- Check out part three here: Azure Data Factory – Lookup Activity

- Check out part four here: Azure Data Factory – If Condition Activity

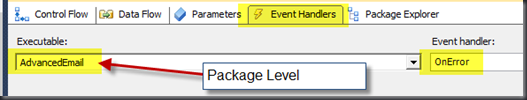

If Condition Activity – If True activities

As mentioned in the intro, we are going to pick up where we left off in the last blog post. Remember that the If Condition activity evaluates an expression and returns either true or false. If the result is true, one set of activities is executed; if the result is false, another set is executed. In this blog post we are going to focus on the If True activities.

- First, select the If Condition activity and then click on Activities in the properties pane, highlighted in yellow in the image below.

- Next, click on the box “Add if True Activity”.

This will launch a new designer window, and this is where we will be configuring our Copy Data activity.
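If you prefer to see what the designer is building behind the scenes, here is a minimal Python sketch of the rough JSON shape of an If Condition activity. Please treat it as an illustration only: the activity names (`Get Metadata`, `Lookup Last Load`, `Copy Employees`), the `LastLoadTime` column, and the expression itself are hypothetical placeholders and are not taken from this series. Only the `IfCondition` type and the `ifTrueActivities` / `ifFalseActivities` properties follow the pipeline JSON format as I understand it.

```python
import json

# Hypothetical expression: true when the file changed after the last load.
# The activity and column names are placeholders for illustration only.
expression = (
    "@greater(activity('Get Metadata').output.lastModified, "
    "activity('Lookup Last Load').output.firstRow.LastLoadTime)"
)

if_condition = {
    "name": "If File Is New",  # hypothetical activity name
    "type": "IfCondition",
    "typeProperties": {
        "expression": {"value": expression, "type": "Expression"},
        # Activities added through "Add If True Activity" are stored here.
        "ifTrueActivities": [{"name": "Copy Employees", "type": "Copy"}],
        # Left empty: nothing runs when the expression evaluates to false.
        "ifFalseActivities": [],
    },
}

print(json.dumps(if_condition, indent=2))
```

The Copy activity in `ifTrueActivities` is only a stub here; the rest of this post fills in its source, sink, and mapping.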

Copy Data Activity, setup and configuration

Fortunately, the Copy Data activity is rather simple to set up and configure. This is a good thing, since it is likely you will be using this activity quite often. The first thing we need to do is add the activity to our pipeline. Select the Copy Data activity from the Data Transformation category and add it to the pipeline.

Now we need to set up the source and the sink datasets, and then map those datasets. You can think of the sink dataset as the destination for all intents and purposes.

Select the Copy Data activity and then click on the Source tab found in the properties window. For the dataset, I am simply going to choose the same dataset we used in the first blog post in this series.
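To make the source and sink idea a little more concrete, here is a hedged Python sketch of how a Copy activity references its two datasets in the pipeline JSON. The dataset names (`BlobEmployeeFile`, `AzureSqlEmp`) and the activity name are hypothetical, and the `DelimitedTextSource` / `AzureSqlSink` type names are my assumption for a delimited file loaded into Azure SQL Database; the exact type names depend on the dataset format and service version you are using.

```python
import json


def dataset_ref(name: str) -> dict:
    """Build a dataset reference as used in an activity's inputs/outputs."""
    return {"referenceName": name, "type": "DatasetReference"}


copy_activity = {
    "name": "Copy Employees",  # hypothetical activity name
    "type": "Copy",
    # The source dataset goes in "inputs", the sink dataset in "outputs".
    "inputs": [dataset_ref("BlobEmployeeFile")],  # hypothetical dataset
    "outputs": [dataset_ref("AzureSqlEmp")],      # hypothetical dataset
    "typeProperties": {
        # Assumed type names; they vary with the dataset format in use.
        "source": {"type": "DelimitedTextSource"},
        "sink": {"type": "AzureSqlSink"},
    },
}

print(json.dumps(copy_activity, indent=2))
```

Notice that the activity only points at datasets by name. The connection details live in the datasets and linked services, which is why we configure those separately.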

Creating the Sink dataset in Azure Data Factory

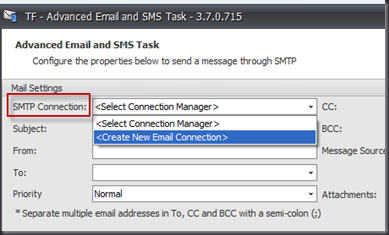

Now it’s time to set up the Sink (destination) dataset. My destination dataset in this scenario is going to be an Azure SQL Database. I am going to click on Sink and then I will click on + New.

When you click on + New, this will launch a new window with all your data store options. I am going to choose Azure SQL Database.

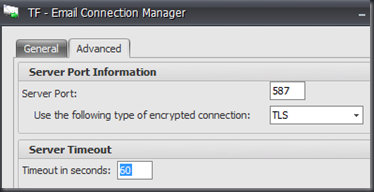

This will launch a new tab in Azure Data Factory where you can now configure your dataset. In the properties window, select “Connection”. Here you will select your linked service, which is your connection to your database. If you haven’t already created a linked service to your database, click on + New. This will open a new window where you can input the properties for your linked service. Take a look at the screenshot below:

Once the linked service has been created, I will select the table I want to load. All available tables will show up in the drop-down list. I will select my dbo.emp table from the drop-down.
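For reference, the linked service and dataset configured above correspond roughly to the JSON sketched in the following Python snippet. This is an approximation under stated assumptions: the names `AzureSqlDatabaseLS` and `AzureSqlEmp` are hypothetical, the connection string is a placeholder you would replace with your own, and I am assuming the dataset format that stores the schema and table separately (older datasets may store a single table name instead).

```python
import json

# Hypothetical linked service: the connection to the Azure SQL Database.
linked_service = {
    "name": "AzureSqlDatabaseLS",
    "properties": {
        "type": "AzureSqlDatabase",
        "typeProperties": {
            # Placeholder only; never hard-code real credentials.
            "connectionString": "<your connection string>"
        },
    },
}

# Hypothetical sink dataset pointing at the dbo.emp table.
sink_dataset = {
    "name": "AzureSqlEmp",
    "properties": {
        "type": "AzureSqlTable",
        "linkedServiceName": {
            "referenceName": linked_service["name"],
            "type": "LinkedServiceReference",
        },
        # Assumed layout: schema and table stored as separate properties.
        "typeProperties": {"schema": "dbo", "table": "emp"},
    },
}

print(json.dumps(sink_dataset, indent=2))
```

The dataset refers to the linked service by name, so a single linked service can be shared by every dataset that reads from or writes to the same database.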

The final step for the dataset is to import the schema, which will be used when we perform the mapping between the source and sink datasets. Next, I am going to click on the Schema tab. On the Schema tab I will select Import Schema. This actually returns a column that I don’t need, so I will remove that column from the schema. Take a look at steps three and four in the following screenshot:

Copy Data activity – Mapping

Now that the source and sink datasets have been configured, it’s time to finish configuring the Copy Data activity. The final step is to map these two datasets. I will navigate back to the pipeline and click on the Copy Data activity. From the properties window I will select the Mapping tab and then import the schemas, which brings in the schemas from both the source and the destination. This step also attempts to map the source columns to the destination columns automatically, so it is important to verify that the mapping is correct. Unfortunately, my source dataset was a file without any column headers, so Azure Data Factory automatically created the column headers Prop_0 and Prop_1 for my first and last name columns. Take a look at the following screenshot:
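The mapping configured on this tab is stored on the Copy activity as a translator. Here is a small Python sketch of what that might look like for my two columns. The source names `Prop_0` and `Prop_1` come from the step above, but the sink column names `FirstName` and `LastName` are hypothetical, since your table may name them differently. The helper `build_translator` is my own illustration and not part of any Azure SDK.

```python
import json


def build_translator(column_pairs):
    """Return a TabularTranslator mapping each source column to a sink column."""
    return {
        "type": "TabularTranslator",
        "mappings": [
            {"source": {"name": src}, "sink": {"name": dst}}
            for src, dst in column_pairs
        ],
    }


# Prop_0 / Prop_1 are the auto-generated source headers;
# FirstName / LastName are hypothetical sink column names.
translator = build_translator([("Prop_0", "FirstName"), ("Prop_1", "LastName")])

# In the pipeline JSON this would sit under the Copy activity's
# typeProperties, next to "source" and "sink".
print(json.dumps({"translator": translator}, indent=2))
```

Writing the mapping out explicitly like this is a handy way to double check the automatic mapping, especially when the source columns have generated names.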

This was a simple application of the Copy Data activity. In a future blog post I will show you how to parameterize the datasets to make this process dynamic. Thanks for reading my blog!