Thank you everyone for attending my webinar on SSIS Performance Tuning, if you missed that presentation you can watch it here: http://pragmaticworks.com/LearningCenter/FreeTrainingWebinars/PastWebinars.aspx?ResourceId=683

Below are some of the questions that I received from the Webinar:

Q: Can you give some performance tips in using script tasks too?

A: Yea, don’t use them! Script tasks can be really bad for performance and many times I have been able to replace someone else’s code with native SSIS components. For example, I had situation where the client was using some very complicated .net code to parse out columns based on logic. The package was taking over an hour to process 1 million records. I replaced this with some conditional splits and derived columns and it now runs in 3 minutes.

Q: I am assuming that the file formats must be the same for all files when using the MultiFlatFile transform, correct?

A: You are absolutely correct. The metadata in each file must match.

Q: PW delivers a ‘TF Advanced Lookup Cache Transform” component. What are the benefits of using this component over the Cache Transform covered earlier? It seems that the TF components cannot make use of the same result set when the data set is role based.

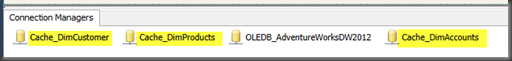

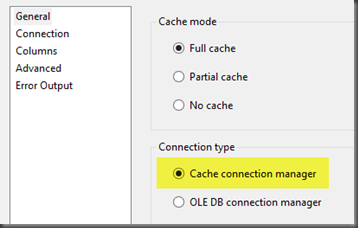

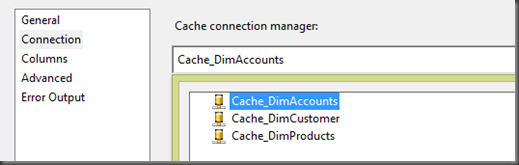

A: For basic caching of data I would use the native SSIS cache transform. The major advantage you get from the Task Factory component is you can do very difficult range lookups with ease and they will perform at a high level. Please see my blog post on this.

Q: What version of SQL Server is being used?

A: I was using SQL 2012, but everything in the presentation is applicable to 2005 and 2008.

Q: With the multi flatfile connection manager can you specify specific types?

A: Yes, the wild card character can be anywhere in the connection string property. So you could do test*.txt to only pull in text files where the file name begins with test.

Q: Why would you ever not use table or view (fast load) option in the OLEDB Destination?

A: Well I personally would always use that option. However, with the fast load option all records are committed for the entire batch. So if there is a record that is bad and causes the failure you will not know which record caused the error. With table or view option each record is committed individually so you know exactly which record caused the failure.

Q: is the volume on?

A: Sorry can you ask that again, I couldn’t hear you….

Thanks again!