With the Retain Same Connection property I was recently able to more than double the performance of my SSIS package for a client. The package was looping over hundreds of thousands of files and logging the file names into a table to be later processed.

Retain Same Connection is a property setting found on connection managers. By default this property is set to false which means that each time the connection manager is used the connection is opened and subsequently closed. However in a situation like mine this can significantly degrade overall performance as the package has to open and close that connection hundreds of thousands of times. In this blog I’m going to set up a very basic example and walk through setting up this connection manager.

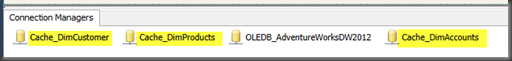

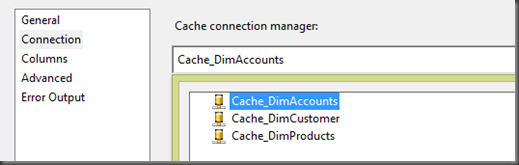

Here I have set up two connection managers. Both connection managers point to the same database. It’s important to note that if you are using Project Level Connection managers in SQL 2012 that setting this property inside any one package will persist across all packages. Therefore I create two connection managers.

Inside the package I am simply using a for each loop task to loop through a list of files in a directory and then I load the file names into a table using an Execute SQL task. For demo purposes I have two examples in one package.

- The FELC on the left takes 30 Seconds to run.

- The FELC on the right takes 11 Seconds to run.

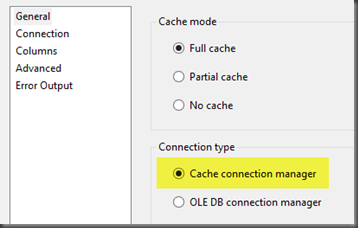

Let’s now discuss how and where we can set this property.

Right click on your connection manager found in the connection managers pain inside the package and select Properties.

Inside the properties window find the property “RetainSameConnection” and set the value to “True”. Now the connection will remain open for the duration of the package.

As always thanks for looking!